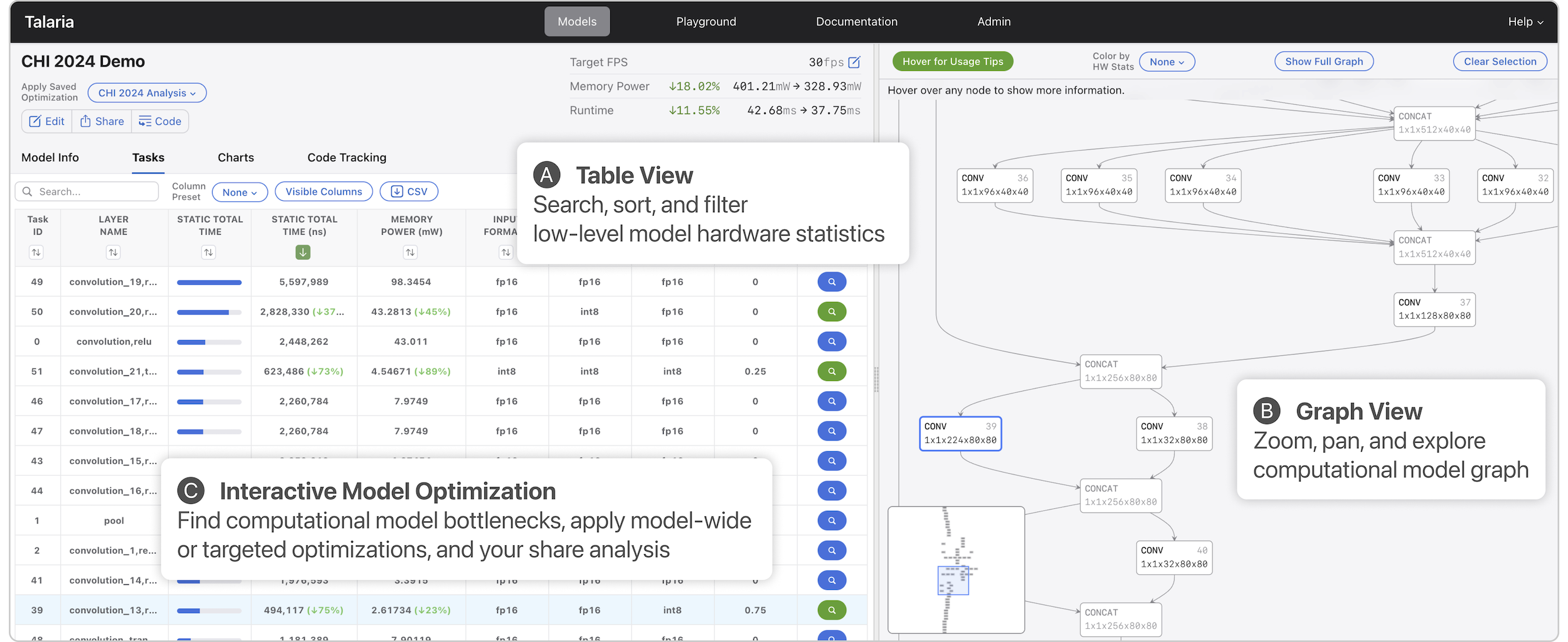

Talaria: Interactively Optimizing Machine Learning Models for Efficient Inference

Abstract

On-device machine learning (ML) moves computation from the cloud to personal devices, protecting user privacy and enabling intelligent user experiences. However, fitting models on devices with limited resources presents a major technical challenge: practitioners need to optimize models and balance hardware metrics such as model size, latency, and power. To help practitioners create efficient ML models, we designed and developed Talaria: a model visualization and optimization system. Talaria enables practitioners to compile models to hardware, interactively visualize model statistics, and simulate optimizations to test the impact on inference metrics. Since its internal deployment two years ago, we have evaluated Talaria using three methodologies: (1) a log analysis highlighting its growth of 800+ practitioners submitting 3,600+ models; (2) a usability survey with 26 users assessing the utility of 20 Talaria features; and (3) a qualitative interview with the 7 most active users about their experience using Talaria.

BibTeX

@inproceedings{hohman2024talaria,
  title={{Talaria: Interactively Optimizing Machine Learning Models for Efficient Inference}},
  author={Hohman, Fred and Wang, Chaoqun and Lee, Jinmook and Görtler, Jochen and Moritz, Dominik and Bigham, Jeffrey P. and Ren, Zhile and Foret, Cecile and Shan, Qi and Zhang, Xiaoyi},
  booktitle={Proceedings of the SIGCHI Conference on Human Factors in Computing Systems},
  year={2024},
  organization={ACM},
  doi={10.1145/3613904.3642628}
}